101 Humans of New York on the Risks of AI

Nobody has ever done an in-person, door-to-door survey about AI risks[1]. What do people really think about AI? Like, really? There have been some surveys on the risks from AI, but there’s a real difference between looking at numbers on a page and actually talking to our fellow humans.

I[2] asked 101 people what they thought about the impact of AI. Approximately half of the responses came from ringing doorbells, and half from approaching people out and about[3]. Every single one was in person, face to face. Around 10 respondents spoke only Spanish and were surveyed in Spanish.

Here are the results. The top-level results show strong interest in regulating AI. Everything after that is reflection on the results and the process, plus the qualitative data I picked up along the way.

Thoughts on Some Specific Questions

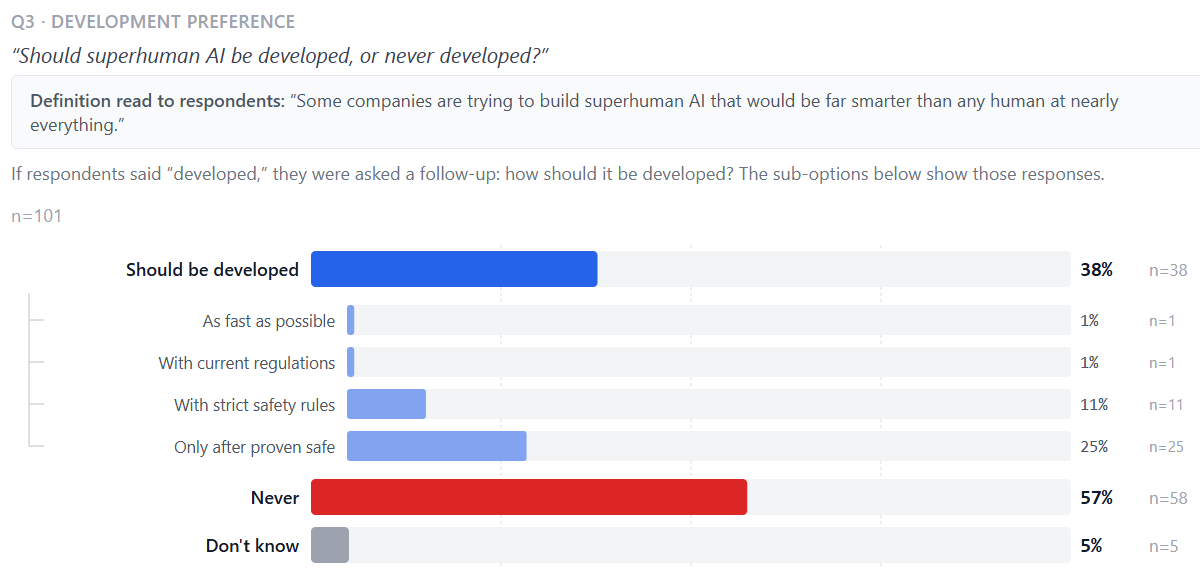

This was the longest and most complex question. Because it isn’t on a simple scale, I basically had to read out all of the options. I decided to first split it on whether someone thinks superhuman AI should be developed at all, and only give the more granular options to people who think it should be. This difference in methodology could explain the difference between my results and FLI’s results, but that seems unlikely: even so, the vast majority of respondents who thought it should be developed still thought it should be developed with serious regulation. It’s possible that the initial “should or should not be developed” framing biased the responses within the “should be developed” subset. Overall, though, these results are extremely strong. Of the 96 people who answered with an opinion, only 2 thought it should be developed under current regulations or as fast as possible.

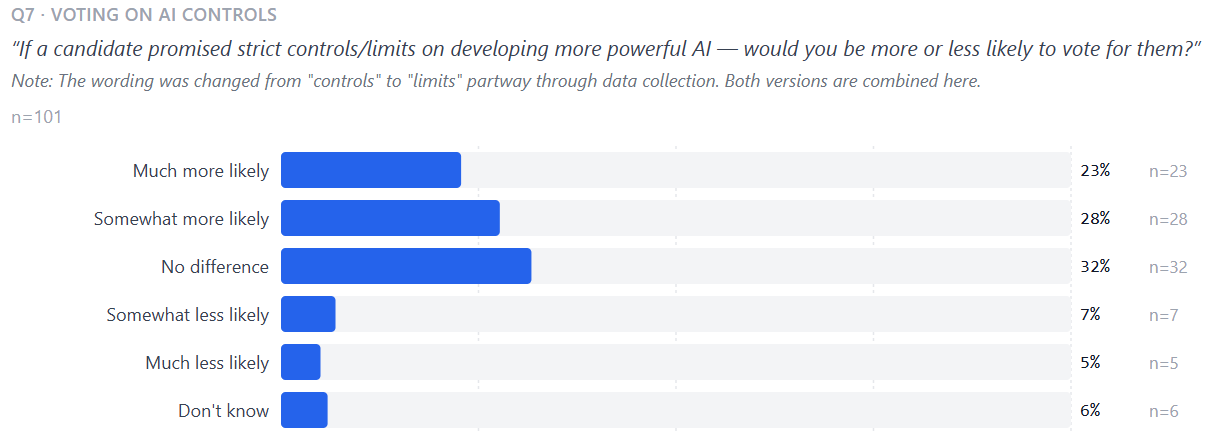

This question was terrible for a couple of reasons. First, people were frequently confused: the “limits on developing more powerful AI” clause is just brutal. It was frequently misunderstood, and my model is that people basically remember only the most recent thing, so they remembered “more powerful AI” and discarded the “limits” part. People would say something like “Yeah, I am super worried about AI, I hate it, I never use it” and then answer “much less likely”. I eventually started repeating “limits on developing more powerful AI” twice to try to help, but this was a good lesson that you want the absolute simplest question construction possible. There is huge variance in reading comprehension, and limiting that as a confounding factor is really important. Similarly, I started with “controls on developing more powerful AI”, and at least one person interpreted that as politicians getting control over the AIs themselves. I tried to be quite careful with my wording (this is part of why I used the simplest explanation of superhuman AI I could think of for question 3), but the takeaway is still that when designing surveys you want essentially no meaning-altering qualifiers, especially ones placed far from what they qualify in the sentence. This question was also confounded by general anti-politician sentiment: some people answered “less likely” on the grounds that you simply can’t trust politicians at all. If I were to do this again, I would probably simplify and ask whether they would support a law instead of a politician[4]. There has been very little published survey work on how AI risk affects electability, so I am still happy I included this question.

Takeaways

Very few people are excited about AI. Almost everyone is worried about superhuman[5] AI becoming too powerful for humans to control. The vast majority support slower development and international treaties limiting AI. Worry is widespread: even some of the people who said they were more excited than concerned about AI still frequently expressed worries, especially in response to the questions about superhuman AI. My vibes-based read is that many of the more excited people are excited because they don’t think superhuman AI is actually possible, yet when given hypotheticals about superhuman AI they are still worried about it or don’t want it to be developed[6].

I did a pretty good job of getting a sample that is relatively representative of the US along the axes of ethnicity, income, education, and politics; however, there is certainly bias from only getting responses in NY/NJ. That said, the responses strongly suggest that AI risk is salient, and that pushing for further AI regulation would be politically advantageous (at least when it comes to electability) for both Democratic and Republican candidates.

Surveying is Inherently Biased

I had never canvassed before, and it is crazy how many ways there are for bias to sneak in.

The type of person who will respond to a surveyor at their door.

Ringing someone’s doorbell is legally protected, but it’s less clear whether entering an apartment building and knocking on doors is, so I didn’t canvass any apartment buildings. I was mostly okay with this because the US as a whole is far less dense than NYC, so going to less dense neighborhoods is likely more representative.

Time of day. I tried going before 4pm once, and you basically only get people above working age. On weekdays I mostly stuck to canvassing from 3:30 to 7pm to get a mix of working-age and non-working-age people. The best time is after 5pm, because then you can start selecting houses based on which ones visibly have their lights on[7].

How I give options. I found that reading the question and then listing off all the answers frequently confused[8] the person being surveyed, so for most questions the best flow was to ask the question, wait to see what they said, and then offer the two or three closest options and let them pick between those. This removes the bias from the ordering of the answers, but it introduces bias in which options I chose, in the moment, to offer based on my understanding of their answer.

Sometimes people clearly don’t understand the questions. I get into this more in my reflections on specific questions above, but it adds bias: if someone says “I hate AI” and then answers a question inconsistently, I have to decide whether to say something like “Are you sure about that? Let me help you understand this question.” If I am more attuned to certain confusions, or more likely to clarify for certain answers, that is another source of bias.

How I say the questions. Do I emphasize a certain answer?

Some people just don’t answer the question. They ramble and go off on a tangent, and then when I give them options they simply won’t pick one. Do I use my judgment and pick the closest option, or record “don’t know”? I usually picked the option I thought was most representative of what they said, but that is a judgment call on my part.

Some respondents had never heard of AI before, so how I described it[9] affected their responses.

Does my bias leak out? I was generally quite careful to open with “I have a survey about the impact of AI on all of us” instead of something like “a survey about the risks of AI”. If the person being surveyed thinks I am angling for a certain type of answer, that changes both the chance they agree to take the survey and, if they are trying to make me happy, potentially their answers. However, some people wanted lots of information about who I am and why I am doing this before they would talk to me. My answers were very much along the lines of “I am an independent researcher surveying about AI. I think AI is very important, and it’s important to know what people think about it. Nobody has ever done an in-person, door-to-door survey on AI before. I hope to publish this.” Even the way the questions are phrased, and the types of questions asked, leak information about my bias, and this is hard to account for.

What do I do with various clarifying questions? What do I do if someone stops answering halfway through and starts trying to figure out what I think? At the end of the day this is in person, and they can do whatever they want. That also means how willing I am to stick around affects the data: if I give up on people who behave in weird or harder-to-interpret ways, I am undersampling that type of person.

People are Weird and Surprising

The beauty of talking to people in person is that you get tons of qualitative data to go along with each response. Here are some interesting things that happened:

When talking about the potential for losing control of superhuman AI, someone said something along the lines of “I believe in God. If God wills it, then AI should have more control.”

One person firmly rejected the idea that the questions were answerable at all: “Why does it matter whether I think AI should be developed? That’s like asking whether I want the grass to be pink. AI already exists; just like crypto, it will just keep existing.” And: “If 99% of people wanted no more AI it wouldn’t make a difference, since it will always be developed by rogue actors[10].” This person was a more extreme version of a couple of other people who, for whatever reason, simply can’t or won’t answer a hypothetical. This is an extremely hard person to survey, and I mostly ended up recording “don’t know” for the relevant responses.

There were a couple of people who were concerned about AI but still against treaties or politicians that try to limit AI. The general sentiment was basically that you can’t trust other countries and you can’t trust politicians. This is always interesting to me, since it seems like it should imply they are against any legislation or treaty, regardless of what it is about.

A couple of people explicitly talked about being worried for their own lives or their kids’ lives.

One woman knew that Google’s top-level search results used AI and mentioned ChatGPT, but on the third or fourth question she went totally off the deep end. She told me she’s not worried about AI, and I shouldn’t be either, as long as I believe in the 12th dimension and above. Apparently humans are 12+ dimensional beings and everything that will happen has already happened in the 12th+ dimension, so AI has already been around for thousands of years and will continue to be around for thousands more. Some people just have wild, out-there beliefs[11].

One of the doorbells I rang happened to belong to a CTO, which was fun. He was generally concerned, pro-regulation, pro-treaty, etc. Roughly, it seemed like the people who used AI the most and knew the most about it were more concerned.

You Can Do This Too!

I built the survey app as a PWA (progressive web app) that works offline anywhere. I strongly recommend trying this out yourself if you care about AI and enjoy walking around outside. Doorbells can be kind of depressing, so I wouldn’t suggest them for your first time, but if you go to a park on a nice day[12] it’s super easeful! Just walk up to whoever looks like they might be open to chatting; the worst that happens is they say no! To make it even more fun, do it with[13] a friend[14]: now you’re walking around a park on a beautiful day with someone you like talking to, occasionally getting to hear wild takes on AI from strangers!

It’s easy for me to add you as a canvasser. All you have to do is:

1. DM me the username (and, optionally, the password[15]) that you want.

2. Once I let you know you’re set up, log in at https://peopleonai.com/survey with your credentials.

3. Read the get started guide[16] and (optionally) add the page to your home screen.

4. Go survey some people!

Footnotes

[1] As far as Claude can tell, at least.

[2] Along with two friends of mine.

[3] Primarily in parks, but also on the street.

[4] Although I’m not really sure, since part of the hope in asking specifically about voter preferences is that it’s more compelling for a politician to take on an issue if they know it will help them get elected, rather than just knowing that people support that type of law in general.

[5] Defined as: “Some companies are trying to build superhuman AI that would be far smarter than any human at nearly everything.”

[6] There were a couple of people who didn’t answer the question on whether superhuman AI should be developed because they didn’t think it was possible and refused to engage with the hypothetical.

[7] But of course this does add a little bit of bias: there might be some systematic difference between the type of person who turns lights on (or doesn’t fully block out their windows, etc.) and the type of person who doesn’t.

[8] There was a huge range of education, intelligence, etc. among respondents.

[9] “A computer that does the thinking of humans, like ChatGPT, self-driving cars, Siri, or Alexa.” Self-driving cars were frequently the most helpful and understandable example. It’s also kind of scary that there are people who have never heard of AI, even though they are certainly still seeing AI-generated content on social media, etc.

[10] When I pointed out that if AI were banned it would be developed far more slowly, since it requires lots of capital, developers, etc., he said that was irrelevant.

[11] And frequently this is also paired with never actually answering a question, or getting mad at me for asking them to choose one of the options. This is a case where I really have to use my best judgment to figure out which answer is most representative of the wild things coming out of their mouth.

[12] Ideally on a weekend, but even on weekdays there can be plenty of people around if it’s nice out.

[13] Thoughts from friend 1: “I had a lot of fun going canvassing around Sunset Park today. I have canvassed for a political candidate before, and that felt a lot more standardized than this. Consistently, as we were asking people in the park today about AI, they were surprised and intrigued by the questions. People have lots of opinions, and it seems like some of them jump at the opportunity to have their voice heard. I was particularly intrigued by some of the responses that we got. I know I live in a bubble of over-educated and technologically literate people, but it’s still surprising to talk to people who have never used AI, or who have never even heard of what it means for something to be ‘artificially’ intelligent. It was also a really great opportunity to be forced to practice my Spanish in a community where 90% of the people we spoke to did not seem to speak English.”

[14] Thoughts from friend 2: “There were responses to some questions that I expected would lead to certain responses on other questions, but sometimes I was surprised by what I perceived as a lack of cohesion in the logic of people’s answers. It was fun to walk around with you and hang out in a neighborhood I’m never in.”

[15] If you don’t, I will auto-generate a password for you.

[16] Inside the app, it’s very simple.